How outrage became the fastest currency in politics—and why the virtues of patience are disappearing.

By Michael Cummins, Editor | October 23, 2025

In an age when political power moves at the speed of code, outrage has become the most efficient form of communication. From an Athenian demagogue to modern AI strategists, the art of acceleration has replaced the patience once practiced by Baker, Dole, and Lincoln, and the Republic is paying the price.

In a server farm outside Phoenix, a machine listens. It does not understand Cleon, but it recognizes his rhythm—the spikes in engagement, the cadence of outrage, the heat signature of grievance. The air is cold, the light a steady pulse of blue LEDs blinking like distant lighthouses of reason, guarding a sea of noise. If the Pnyx was powered by lungs, the modern assembly runs on lithium and code.

The machine doesn’t merely listen; it categorizes. Each tremor of emotion becomes data, each complaint a metric. It assigns every trauma a vulnerability score, every fury a probability of spread. It extracts the gold of anger from the dross of human experience, leaving behind a purified substance: engagement. Its intelligence is not empathy but efficiency. It knows which words burn faster, which phrases detonate best. The heat it studies is human, but the process is cold as quartz.

Every hour, terabytes of grievance are harvested, tagged, and rebroadcast as strategy. Somewhere in the hum of cooling fans, democracy is being recalibrated.

The Athenian Assembly was never quiet. On clear afternoons, the shouts carried down from the Pnyx, a stone amphitheater that served as both parliament and marketplace of emotion. Citizens packed the terraces—farmers with olive oil still on their hands, sailors smelling of the sea, merchants craning for a view—and waited for someone to stir them. When Cleon rose to speak, the sound changed. Thucydides called him “the most violent of the citizens,” which was meant as condemnation but functioned as a review. Cleon had discovered what every modern strategist now understands: volume is velocity.

He was a wealthy tanner who rebranded himself as a man of the people. His speeches were blunt, rapid, full of performative rage. He interrupted, mocked, demanded applause. The philosophers who preferred quiet dialectic despised him, yet Cleon understood the new attention graph of the polis. He was running an A/B test on collective fury, watching which insults drew cheers and which silences signaled fatigue. Democracy, still young, had built its first algorithm without realizing it. The Republican Party, twenty-four centuries later, would perfect the technique.

Grievance was his software. After the death of Pericles, plague and war had shaken Athens; optimism curdled into resentment. Cleon gave that resentment a face. He blamed the aristocracy for cowardice, the generals for betrayal, the thinkers for weakness. “They talk while you bleed,” he shouted. The crowd obeyed. He promised not prosperity but vengeance—the clean arithmetic of rage. The crowd was his analytics; the roar his data visualization. Why deliberate when you can demand? Why reason when you can roar?

The brain recognizes threat before comprehension. Cognitive scientists have measured it: forty milliseconds separate the perception of danger from understanding. Cleon had no need for neuroscience; he could feel the instant heat of outrage and knew it would always outrun reflection. More than two millennia later, the same principle drives our political networks. The algorithm optimizes for outrage because outrage performs. Reaction is revenue. The machine doesn’t care about truth; it cares about tempo. The crowd has become infinite, and the Pnyx has become the feed.

The Mytilenean debate proved the cost of speed. When a rebellious island surrendered, Cleon demanded that every man be executed, every woman enslaved. His rival Diodotus urged mercy. The Assembly, inflamed by Cleon’s rhetoric, voted for slaughter. A ship sailed that night with the order. By morning remorse set in; a second ship was launched with reprieve. The two vessels raced across the Aegean, oars flashing. The ship of reason barely arrived first. We might call it the first instance of lag.

Today the vessel of anger is powered by GPUs. “Adapt and win or pearl-clutch and lose,” reads an internal memo from a modern campaign shop. Why wait for a verifiable quote when an AI can fabricate one convincingly? A deepfake is Cleon’s bluntness rendered in pixels, a tactical innovation of synthetic proof. The pixels flicker slightly, as if the lie itself were breathing. During a recent congressional primary, an AI-generated confession spread through encrypted chats before breakfast; by noon, the correction was invisible under the debris of retweets. Speed wins. Fact-checking is nostalgia.

Cleon’s attack on elites made him irresistible. He cast refinement as fraud, intellect as betrayal. “They dress in purple,” he sneered, “and speak in riddles.” Authenticity became performance; performance, the brand. The new Cleon lives in a warehouse studio surrounded by ring lights and dashboards. He calls himself Leo K., host of The Agora Channel. The room itself feels like a secular chapel of outrage—walls humming, screens flickering. The machine doesn’t sweat, doesn’t blink. It translates heat into metrics and metrics into marching orders. An AI voice whispers sentiment scores into his ear. He doesn’t edit; he adjusts. Each outrage is A/B-tested in real time. His analytics scroll like scripture: engagement per minute, sentiment delta, outrage index. His AI team feeds the system new provocations to test. Rural viewers see forgotten farmers; suburban ones see “woke schools.” When his video “They Talk While You Bleed” hits ten million views, Leo K. doesn’t smile. He refreshes the dashboard. Cleon shouted. The crowd obeyed. Leo posted. The crowd clicked.

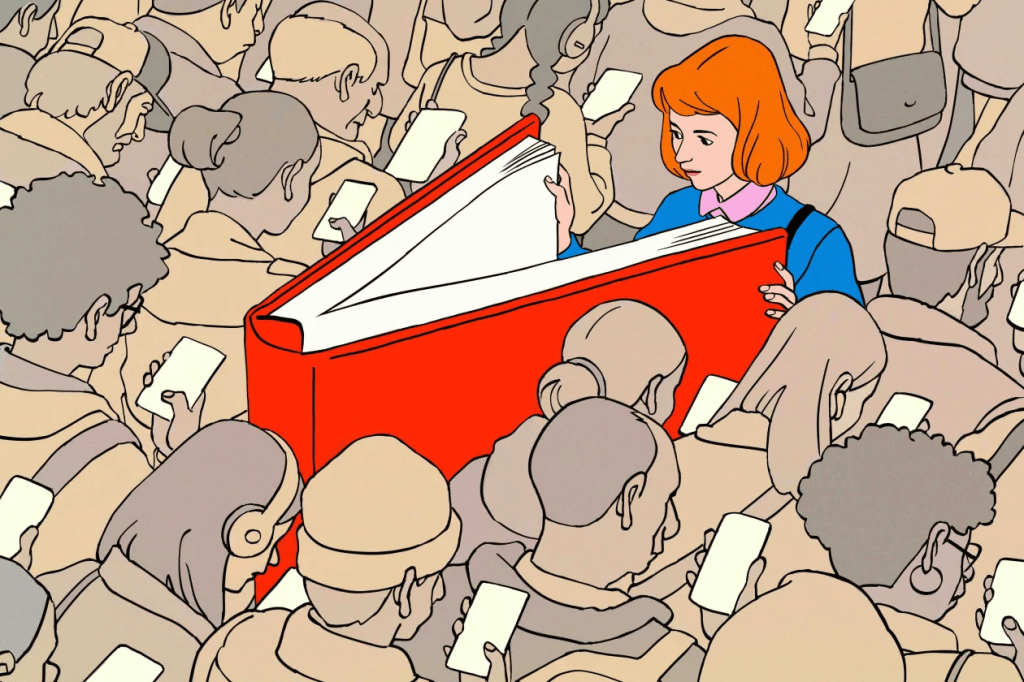

Meanwhile, the opposition labors under its own conscientiousness. Where one side treats AI as a tactical advantage, the other treats it as a moral hazard. The Democratic instinct remains deliberative: form a task force, issue a six-point memo, hold an AI 101 training. They build models to optimize voter files, diversity audits, and fundraising efficiency—work that improves governance but never goes viral. They’re still formatting the memo while the meme metastasizes. They are trying to construct a more accountable civic algorithm while their opponents exploit the existing one to dismantle civics itself. Technology moves at the speed of the most audacious user, not the most virtuous.

The penalty for slowness has consumed even those who once mastered it. The Republican Party that learned to weaponize velocity was once the party of patience. Its old guardians—Howard Baker, Bob Dole, and before them Abraham Lincoln—believed that democracy endured only through slowness: through listening, through compromise, through the humility to doubt one’s own righteousness.

Baker was called The Great Conciliator, though what he practiced was something rarer: slow thought. He listened more than he spoke. His Watergate question—“What did the President know, and when did he know it?”—was not theater but procedure, the careful calibration of truth before judgment. Baker’s deliberation depended on the existence of a stable document—minutes, transcripts, the slow paper trail that anchored reality. But the modern ecosystem runs on disposability. It generates synthetic records faster than any investigator could verify. There is nothing to subpoena, only content that vanishes after impact. Baker’s silences disarmed opponents; his patience made time a weapon. “The essence of leadership,” he said, “is not command, but consensus.” It was a creed for a republic that still believed deliberation was a form of courage.

Bob Dole was his equal in patience, though drier in tone. Scarred from war, tempered by decades in the Senate, he distrusted purity and spectacle. He measured success by text, not applause. He supported the Americans with Disabilities Act, expanded food aid, negotiated budgets with Democrats. His pauses were political instruments; his sarcasm, a lubricant for compromise. “Compromise,” he said, “is not surrender. It’s the essence of democracy.” He wrote laws instead of posts. He joked his way through stalemates, turning irony into a form of grace. He would be unelectable now. The algorithm has no metric for patience, no reward for irony.

The Senate, for Dole and Baker, was an architecture of time. Every rule, every recess, every filibuster was a mechanism for patience. Time was currency. Now time is waste. The hearing room once built consensus; today it builds clips. Dole’s humor was irony, a form of restraint the algorithm can’t parse—it depends on context and delay. Baker’s strength was the paper trail; the machine specializes in deletion. Their virtues—documentation, wit, patience—cannot be rendered in code.

And then there was Lincoln, the slowest genius in American history, a man who believed that words could cool a nation’s blood. His sentences moved with geological patience, clause folding into clause. “I am slow to learn,” he confessed, “and slow to forget that which I have learned.” In his world, reflection was leadership. In ours, it’s latency. His sentences resisted compression. They were long enough to make the reader breathe differently. Each clause deferred judgment until understanding arrived, a syntax designed for moral digestion. The algorithm, if handed the Gettysburg Address, would discard its middle clauses, highlight the opening for brevity, and tag the closing for virality. It would miss entirely the hesitation, the part that transforms rhetoric into conscience.

The republic of Lincoln has been replaced by the republic of refresh. The party of Lincoln has been replaced by the platform of reflex: always responding, never reflecting. The Great Compromisers have given way to the Great Amplifiers. The virtues that once defined republican governance (discipline, empathy, institutional humility) are now algorithmically invisible. The feed rewards provocation, not patience. Consensus cannot trend.

Caesar understood the conversion of speed into power long before the machines. His dispatches from Gaul were press releases disguised as history, written in the calm third person to give propaganda the tone of inevitability. By the time the Senate gathered to debate his actions, public opinion was already conquered. Procedure could not restrain velocity. When he crossed the Rubicon, they were still writing memos. Celeritas—speed—was his doctrine, and the Republic never recovered.

Augustus learned the next lesson: velocity means nothing without permanence. “I found Rome a city of brick,” he said, “and left it a city of marble.” The marble was propaganda you could touch—forums and temples as stone deepfakes of civic virtue. His Res Gestae proclaimed him restorer of the Republic even as he erased it. Cleon disrupted. Caesar exploited. Augustus consolidated. If Augustus’s monuments were the hardware of empire, our data centers are its cloud: permanent, unseen, self-repairing. The pattern persists—outrage, optimization, control.

Every medium has democratized passion before truth. The printing press multiplied Luther’s fury, pamphlets inflamed the Revolution, radio industrialized empathy for tyrants. Artificial intelligence perfects the sequence by producing emotion on demand. It learns our triggers as Cleon learned his crowd, adjusting the pitch until belief becomes reflex. The crowd’s roar has become quantifiable—engagement metrics as moral barometers. The machine’s innovation is not persuasion but exhaustion. The citizens it governs are too tired to deliberate. The algorithm doesn’t care. It calculates.

Still, there are always philosophers of delay. Socrates practiced slowness as civic discipline. Cicero defended the Republic with essays while Caesar’s legions advanced. A modern startup once tried to revive them in code—SocrAI, a chatbot designed to ask questions, to doubt. It failed. Engagement was low; investors withdrew. The philosophers of pause cannot survive in the economy of speed.

Yet some still try. A quiet digital space called The Stoa refuses ranking and metrics. Posts appear in chronological order, unboosted, unfiltered. It rewards patience, not virality. The users joke that they’re “rowing the slow ship.” Perhaps that is how reason persists: quietly, inefficiently, against the current.

The Algorithmic Republic waits just ahead. Polling is obsolete; sentiment analysis updates in real time. Legislators boast about their “Responsiveness Index.” Justice Algorithm 3.1 recommends a twelve percent increase in sentencing severity for property crimes after last week’s outrage spike. A senator brags that his approval latency is under four minutes. A citizen receives a push notification announcing that a bill has passed—drafted, voted on, and enacted entirely by trending emotion. Debate is redundant; policy flows from mood. Speed has replaced consent. A mayor, asked about a controversial bylaw, shrugs: “We used to hold hearings. Now we hold polls.”

To row the slow ship is not simply to remember—it is to resist. The virtues of Dole’s humor and Baker’s patience were not ornamental; they were mechanical, designed to keep the republic from capsizing under its own speed. The challenge now is not finding the truth but making it audible in an environment where tempo masquerades as conviction. The algorithm has taught us that the fastest message wins, even when it’s wrong.

The vessel of anger sails endlessly now, while the vessel of reflection waits for bandwidth. The feed never sleeps. The Assembly never adjourns. The machine listens and learns. The virtues of Baker, Dole, and Lincoln—listening, compromise, slowness—are almost impossible to code, yet they are the only algorithms that ever preserved a republic. They built democracy through delay.

Cleon shouted. The crowd obeyed. Leo posted. The crowd clicked. Caesar wrote. The crowd believed. Augustus built. The crowd forgot. The pattern endures because it satisfies a human need: to feel unity through fury. The danger is not that Cleon still shouts too loudly, but that we, in our republic of endless listening, have forgotten how to pause.

Perhaps the measure of a civilization is not how fast it speaks, but how long it listens. Somewhere between the hum of the servers and the silence of the sea, the slow ship still sails—late again, but not yet lost.

THIS ESSAY WAS WRITTEN AND EDITED UTILIZING AI